Latest ClickHouse Newsletter: Better Joins, Open Data Lakes, and Vector Search

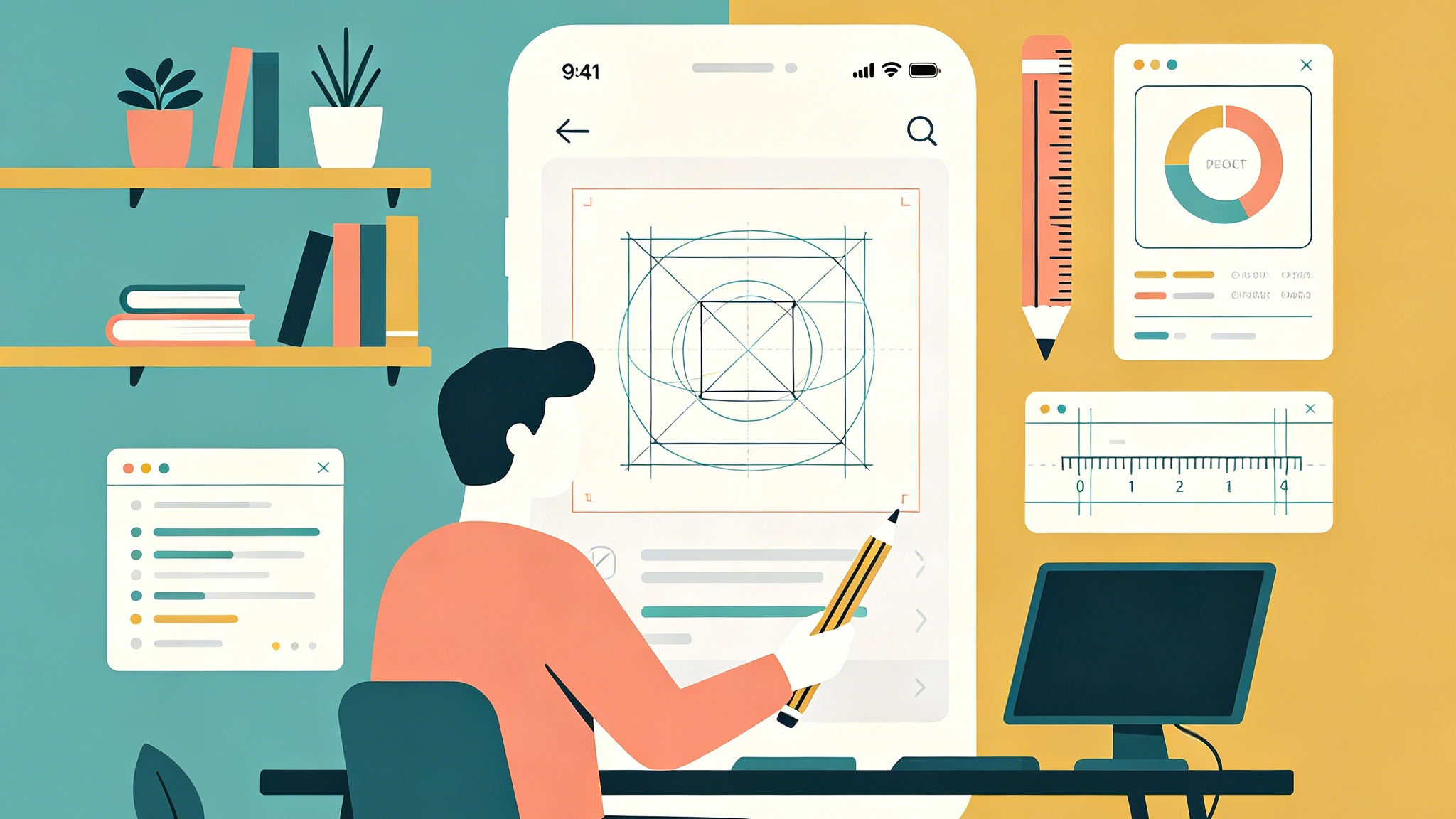

Real-time analytics is no longer just about fast scans on local disks. As we move through 2026, the landscape of data engineering has shifted toward a more integrated, open, and intelligent ecosystem. This edition of our technical deep-dive explores how recent engine updates have transformed the way we handle massive joins, manage semi-structured data, and integrate with the broader open-source data lakehouse standards.

The Evolution of Query Planning and Joins

For a long time, the advice for ClickHouse users was to avoid large joins whenever possible, often favoring wide, denormalized tables. While denormalization remains a powerful strategy for performance, the reality of modern data workloads demands flexible relational capabilities. Recent updates in the 25.x and 26.x release cycles have fundamentally changed the economics of joining data in ClickHouse.

Parallel Hash Join and the dpsize Algorithm

The move toward making the parallel_hash join the default strategy marked a significant milestone. This allows the engine to utilize multiple threads for building the hash table, drastically reducing the wall-clock time for large-scale joins. However, the real intelligence lies in the newer experimental query planning algorithms, such as dpsize.

In complex analytical queries involving multiple inner joins, the order of execution can mean the difference between a sub-second response and an out-of-memory (OOM) error. The dpsize algorithm helps the query planner choose more efficient join orders by estimating the cost and cardinality of intermediate results. This reduces the burden on data engineers to manually order their tables in the FROM clause, making the system more predictable under heavy concurrency.
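To make the idea of size-based dynamic programming concrete, here is a toy sketch of a DPsize-style enumerator. It is not ClickHouse's implementation: the table names, row counts, selectivities, and the cost model (the sum of estimated intermediate row counts) are all assumptions chosen for illustration.

```python
from itertools import combinations

def estimate_rows(subset, rows, selectivity):
    """Estimated output rows: product of table sizes times all applicable join selectivities."""
    names = sorted(subset)
    card = 1.0
    for name in names:
        card *= rows[name]
    for pair in combinations(names, 2):
        card *= selectivity.get(pair, 1.0)  # no predicate means a cross product
    return card

def best_join_order(rows, selectivity):
    """Toy DPsize: solve subsets of size 1, then 2, ... reusing the best smaller plans."""
    tables = sorted(rows)
    best = {frozenset([t]): (0.0, t) for t in tables}  # subset -> (cost, plan)
    for size in range(2, len(tables) + 1):
        for combo in combinations(tables, size):
            subset = frozenset(combo)
            out_rows = estimate_rows(subset, rows, selectivity)
            for left_size in range(1, size):
                for left_combo in combinations(combo, left_size):
                    left = frozenset(left_combo)
                    right = subset - left
                    # Cost = cost of both inputs plus the rows this join produces.
                    cost = best[left][0] + best[right][0] + out_rows
                    if subset not in best or cost < best[subset][0]:
                        best[subset] = (cost, (best[left][1], best[right][1]))
    return best[frozenset(tables)]

# Hypothetical table sizes and join selectivities (keys are alphabetically sorted pairs).
rows = {"events": 1_000_000, "users": 10_000, "countries": 200}
selectivity = {("events", "users"): 1 / 10_000, ("countries", "users"): 1 / 200}
cost, plan = best_join_order(rows, selectivity)
print(plan, round(cost))
```

With these made-up numbers, the cheapest plan joins users with countries first and only then brings in events, because that intermediate result is far smaller than anything that starts from the events table.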

Runtime Filters for Anti-Joins

One of the more nuanced but impactful performance gains comes from support for join runtime filters in anti-joins. When you need to find records that do not exist in another set (a common pattern in churn analysis or fraud detection), a runtime filter built from the join keys of one side lets the engine discard non-qualifying rows early in the scan of the other side. By pushing these filters down, ClickHouse avoids reading data that cannot appear in the result, lowering I/O overhead.
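As a simplified mental model (not the engine's actual code path), the sketch below builds an exact key set from the right side and applies it while scanning the left side. The function name, the `user_id` key, and the sample data are hypothetical. An exact set is assumed here because a probabilistic filter with false positives would wrongly discard rows from an anti-join.

```python
def anti_join_with_runtime_filter(left_blocks, right_keys, key="user_id"):
    """Return left rows whose key is absent from right_keys, plus how many rows reached the join."""
    # Build phase: the runtime filter is the exact set of right-side keys.
    key_filter = set(right_keys)

    result = []
    rows_reaching_join = 0
    for block in left_blocks:
        # Scan phase: a row whose key is in the filter can never survive an anti-join,
        # so it is dropped before it reaches the join operator.
        survivors = [row for row in block if row[key] not in key_filter]
        rows_reaching_join += len(survivors)
        result.extend(survivors)
    return result, rows_reaching_join

# Hypothetical churn check: which known users are not in the active set?
left_blocks = [
    [{"user_id": 1}, {"user_id": 2}],
    [{"user_id": 3}, {"user_id": 4}],
]
active_ids = [2, 3, 4]
churned, rows_reaching_join = anti_join_with_runtime_filter(left_blocks, active_ids)
print(churned, rows_reaching_join)
```

In this toy version the filter does nearly all the work, which is the point: the earlier rows are eliminated, the less data the downstream join has to handle.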

Breaking the Silos: ClickHouse as a Lakehouse

The boundary between "fast analytics" and "data lakes" has effectively dissolved. The native integration with Apache Iceberg and Delta Lake has matured from a simple read-only experiment into a production-grade feature set.

Iceberg REST Catalog and Schema Evolution

Support for the Iceberg REST catalog allows ClickHouse to act as a first-class citizen in the modern data stack. You can now query Iceberg tables with the same ease as local MergeTree tables, but with the added benefit of unified metadata management.

More importantly, schema evolution is now handled gracefully. In the past, changing a column type in your data lake could break downstream analytics. Now, ClickHouse automatically recognizes these changes via the catalog, ensuring that pipelines remain resilient. This is particularly valuable for teams running a Medallion architecture, where data flows from raw "Bronze" layers into refined "Gold" layers.

S3 and Azure Queue Enhancements

Cloud-native storage is the backbone of modern scalability. Recent optimizations have focused on reducing the latency of querying S3 buckets. Feature additions like path filter pushdown and the ability to trigger move actions after processing files have simplified the "ingest-then-analyze" workflow. For teams running massive ingestion pipelines, these improvements reduce the reliance on external orchestration tools, as ClickHouse can now manage more of the file-level lifecycle internally.

The JSON Revolution: Beyond Strings and Blobs

Handling semi-structured data has historically been a trade-off between the flexibility of a document store and the performance of a columnar database. The "new" JSON data type, introduced and refined over the past year, aims to provide the best of both worlds.

Under the Hood of the JSON Type

Unlike the old approach of storing JSON as a simple string or a nested map, the current implementation dynamically flattens JSON paths into subcolumns. This means that if you frequently query a specific field within a large JSON blob, ClickHouse only reads the data for that specific field from the disk.
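The flattening idea can be sketched in a few lines. This is an illustrative model only, assuming dotted path names and `None` for missing values; the real storage format is considerably more involved.

```python
def flatten(obj, prefix=""):
    """Yield (dotted_path, value) pairs for every leaf in a nested dict."""
    for key, value in obj.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            yield from flatten(value, path)
        else:
            yield path, value

def to_subcolumns(records):
    """Store each JSON path as its own column; rows lacking a path get None."""
    columns = {}
    for i, record in enumerate(records):
        for path, value in flatten(record):
            # Backfill None for rows that were stored before this path first appeared.
            columns.setdefault(path, [None] * i).append(value)
        for col in columns.values():
            if len(col) <= i:  # path absent from this record
                col.append(None)
    return columns

# Hypothetical sample records.
records = [
    {"user": {"id": 1, "plan": "pro"}, "ms": 12},
    {"user": {"id": 2}, "ms": 40, "error": "timeout"},
]
columns = to_subcolumns(records)
print(columns["user.id"])  # a query on user.id touches only this list
```

A query that filters on `user.id` reads one list and never looks at `ms` or `error`, which is the columnar benefit the JSON type is after.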

One of the biggest technical challenges solved was the "column avalanche" problem. In previous iterations, a JSON object with thousands of unique keys could create thousands of small files on disk, killing filesystem performance. The current engine uses a more sophisticated storage format that clusters related subcolumns, preventing the file count from exploding while maintaining columnar read efficiency.

Type Casting and Nullability

Modern JSON workloads often involve inconsistent data types—for example, a field that is an integer in one record but a string in another. The engine now handles these variations with implicit type casting and sophisticated nullability tracking. This allows for "schema-on-read" flexibility without the catastrophic performance penalties usually associated with it.

Vector Search and AI Integration

As generative AI and large language models (LLMs) continue to dominate the tech landscape, the demand for vector search within the database has grown. ClickHouse has evolved to support these workloads through the QBit data type and specialized vector similarity indexes.

SIMD-Accelerated Vector Operations

Performance in vector search is all about calculating the distance between high-dimensional embeddings. By leveraging SIMD (Single Instruction, Multiple Data) instructions, ClickHouse can compute L2 distance and cosine similarity over many vector components per CPU instruction. Recent updates have fixed several correctness issues with non-constant reference vectors, making the system more robust for real-time recommendation engines and similarity search applications.
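For reference, the two metrics named above are shown here as plain scalar Python. A SIMD implementation computes the same quantities but processes several components per instruction; the helper names and sample embeddings below are made up for the example.

```python
import math

def l2_distance(a, b):
    """Euclidean distance: square root of the summed squared differences."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def cosine_similarity(a, b):
    """Dot product divided by the product of the vector norms."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def nearest(query, embeddings):
    """Brute-force search: name of the stored vector closest to the query by L2 distance."""
    return min(embeddings, key=lambda name: l2_distance(query, embeddings[name]))

# Hypothetical two-dimensional embeddings.
embeddings = {"doc_a": [0.0, 1.0], "doc_b": [1.0, 0.0], "doc_c": [5.0, 5.0]}
print(nearest([0.9, 0.1], embeddings))
```

A vector similarity index exists to avoid this brute-force pass over every stored embedding, at the cost of approximate results.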

Vector Index Lifecycle

Operational reliability is key for vector workloads. New mechanisms ensure that vector index cache entries are cleaned up immediately when table parts are dropped or merged. This prevents memory leaks and ensures that the system's resource usage remains stable even as data is constantly being updated or rotated.

Architectural Patterns: The Medallion Approach

We are seeing more organizations adopt the Medallion architecture directly within ClickHouse. This pattern organizes data into three logical tiers:

- Bronze: Raw data ingested from sources like Kafka, S3, or PostgreSQL (via CDC). This layer preserves the original state, often using the JSON data type for maximum flexibility.

- Silver: Data that has been cleaned, deduplicated, and normalized. Here, ReplacingMergeTree is frequently used to handle updates, and Materialized Views perform initial aggregations.

- Gold: Highly optimized, aggregated tables ready for consumption by BI tools and dashboards. This layer often utilizes Projection features to provide multiple sort orders for the same dataset.

Applying this architecture to real-time data, such as Bluesky social network feeds or high-frequency financial ticks, allows teams to maintain a clear data lineage while achieving sub-second query performance on the final "Gold" tables.
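The deduplication step in the Silver tier can be pictured as follows. This sketch models only the outcome of a ReplacingMergeTree merge with a version column (the highest version per key survives, and the most recently inserted row wins ties). The column names `id` and `version` are assumptions, and in a real table the collapse happens eventually, at merge time, not on insert.

```python
def replacing_merge(rows, key="id", version="version"):
    """Keep, for each key, the row with the highest version (later rows win ties)."""
    latest = {}
    for row in rows:
        current = latest.get(row[key])
        if current is None or row[version] >= current[version]:
            latest[row[key]] = row
    return sorted(latest.values(), key=lambda r: r[key])

# Hypothetical Bronze rows containing an update and an out-of-order late arrival.
bronze = [
    {"id": 1, "version": 1, "status": "created"},
    {"id": 2, "version": 1, "status": "created"},
    {"id": 1, "version": 3, "status": "shipped"},
    {"id": 1, "version": 2, "status": "paid"},  # arrives late, loses to version 3
]
silver = replacing_merge(bronze)
print(silver)
```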

Practical Query Optimization Strategies

Even with a powerful engine, query performance often comes down to how you structure your schema and write your SQL. Here are several updated strategies for 2026:

Using Skip Indexes Effectively

Skip indexes (like minmax, set, and bloom_filter) are essential for avoiding full table scans on non-primary key columns. Recent engine improvements have made these indexes much smarter when dealing with mixed AND/OR conditions in WHERE clauses. If you are querying large tables with diverse filters, check that each skip index's type and GRANULARITY setting suit how the indexed column's values are distributed across granules: an index helps only when whole granules can be ruled out.
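A minmax index is the easiest of these to model. The sketch below is a conceptual illustration with hypothetical data and function names, not the on-disk format: it records the minimum and maximum of each block of rows and skips any block whose range cannot overlap the query range.

```python
def build_minmax_index(values, granule_size):
    """Record (min, max) for each consecutive block of granule_size values."""
    return [
        (min(values[i:i + granule_size]), max(values[i:i + granule_size]))
        for i in range(0, len(values), granule_size)
    ]

def granules_to_read(index, low, high):
    """Indexes of granules whose [min, max] range overlaps the query range [low, high]."""
    return [i for i, (lo, hi) in enumerate(index) if hi >= low and lo <= high]

# Hypothetical column values, stored in table order, three rows per granule.
values = [3, 5, 4, 40, 42, 41, 7, 90, 8, 100, 101, 102]
index = build_minmax_index(values, granule_size=3)
selected = granules_to_read(index, low=41, high=45)
print(index, selected)
```

Granules 0 and 3 are skipped outright. Granule 2 must still be read even though none of its values fall in the range, because its span (7 to 90) is wide. This is why a minmax index pays off mainly when the indexed column correlates with the table's sort order.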

The Power of Dictionaries

Dictionaries are a highly efficient way to handle join-like operations for reference data. By keeping mapping data (like product IDs to names) in memory, you can simplify your queries and gain significant performance over standard joins. The ability to source dictionary data from external databases or S3 with automatic refresh makes them a versatile tool for data enrichment.
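Conceptually, a dictionary lookup replaces a join with an in-memory key-to-value fetch per row. The snippet below is only an analogy using a Python dict; the product data, the `enrich` helper, and the default value are invented for illustration.

```python
# Hypothetical reference data held in memory, keyed by product ID.
product_names = {101: "Keyboard", 102: "Mouse"}

def enrich(orders, dictionary, default="unknown"):
    """Attach a product name to each order via a constant-time lookup instead of a join."""
    return [
        {**order, "product_name": dictionary.get(order["product_id"], default)}
        for order in orders
    ]

orders = [{"order_id": 1, "product_id": 102}, {"order_id": 2, "product_id": 999}]
enriched = enrich(orders, product_names)
print(enriched)
```

The fallback for the unmatched ID shows why lookups against reference data usually need an explicit default.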

Materialized Views vs. Refreshable MVs

Standard Materialized Views are triggered on insert, making them ideal for real-time streaming data. However, for more complex transformations that involve looking at the entire state of a table, Refreshable Materialized Views are now the preferred choice. They allow you to define a refresh schedule (e.g., every minute or every hour), providing a middle ground between real-time streaming and traditional batch processing.
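The difference between the two models can be sketched with a small simulation. It assumes a hypothetical page-view table. The insert-triggered view sees only each new block, while the scheduled refresh recomputes from the full table and so can produce values, such as each page's share of total traffic, that depend on every row.

```python
from collections import Counter

class IncrementalView:
    """Insert-triggered: updated from each newly inserted block only."""
    def __init__(self):
        self.counts = Counter()

    def on_insert(self, block):
        self.counts.update(row["page"] for row in block)

def refresh_traffic_share(table):
    """Scheduled refresh: recompute each page's share of all views from the whole table."""
    counts = Counter(row["page"] for row in table)
    total = sum(counts.values())
    return {page: n / total for page, n in counts.items()}

# Hypothetical insert blocks arriving over time.
blocks = [
    [{"page": "/home"}, {"page": "/docs"}],
    [{"page": "/home"}, {"page": "/home"}],
]
table, view = [], IncrementalView()
for block in blocks:
    table.extend(block)
    view.on_insert(block)  # fires on every insert

shares = refresh_traffic_share(table)  # would run on a timer in practice
print(view.counts, shares)
```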

Deployment Trends: BYOC and Compute Separation

In the cloud, deployments are moving toward Bring Your Own Cloud (BYOC) and the separation of compute and storage. This architecture allows organizations to keep their data within their own security perimeter while leveraging the scaling capabilities of a managed service.

Compute-Compute Separation

One of the most requested features has been the ability to separate different types of workloads onto different compute nodes. For example, you can have a high-performance cluster of nodes dedicated to data ingestion (Write nodes) and a separate, independently scalable cluster for user-facing dashboards (Read nodes). This prevents a massive data load from impacting the latency of critical business reports.

Conclusion

ClickHouse has transformed from a niche tool for log analysis into a comprehensive platform for real-time analytics, vector search, and open lakehouse integration. The focus has shifted from mere speed to "speed with stability." Whether you are building an observability platform, a financial trading system, or a product analytics suite, the current engine provides the tools necessary to handle scale without the operational complexity of the past.

As we look forward, the emphasis remains on making the database more intuitive. With improvements in automatic join ordering, smarter JSON handling, and tighter integration with the global data ecosystem, the barrier to entry for high-performance analytics continues to fall. The key is to understand these new primitives—like the Medallion architecture and specialized vector types—and apply them to your specific data challenges.